The stage show KÀ, a production by the Canadian circus troupe Cirque du Soleil, was directed by Robert Lepage. The production revolves around a storyline, which is unusual, as Cirque productions are generally theme-based. The projected images follow the course of this story and serve as virtual scenery.

Developing the interactive projections, which are generated in real time purely by mathematical and physical calculations, took more than two years of work leading up to the premiere in 2004. The projections were revised slightly in 2012 and adapted to more current technology. Advances in computer technology made some image calculations possible that were not achievable at the time of the premiere.

Among the projections are a rain of arrows, storm clouds, sea waves, an underwater scene, stone and ice landscapes, the glowing lianas of a forest, and the caustics of an artificial water surface. Some of the original images can be seen in the 3D film production Cirque du Soleil: Worlds Away.

realization

It has been almost ten years since the premiere of KÀ, so I have decided to finally release more information on the technology that drives the projections. I figure that by now many people have worked out the processes for themselves, and they should be open to everybody. Warning: it gets very technical. The following text presents some previously unreleased information on the technical aspects of the show.

main stage / projection mapping

A special feature of the system is the technique of projection mapping onto a stage that moves with several degrees of freedom. The images move with the 25' x 50' (ca. 8 x 15 m) main stage and appear almost as if they were painted onto it. For instance, the lighting and shadows of a projected stone surface are calculated in real time depending on the stage position, and an interactive water surface likewise follows the movement of the stage.

To achieve this, the stage, called the Sand Cliff Deck, and other parts of the theater were modeled true to scale in the computer. The position of the real stage is captured by sensors provided by the automation interlock system and controls a virtual counterpart. So, in addition to the real stage, there is a virtual stage inside the computer that moves exactly as the original one does.

To realize the projection mapping, I had to place one virtual camera per projector in the program. The virtual camera optics had to match the real projector optics exactly. Projecting the image that the virtual camera films of the virtual stage makes all of the pixels fall into place, and it looks as if the things displayed on the virtual stage are happening on the real stage.
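The idea of a virtual camera that stands in for a projector can be illustrated with an ideal pinhole model. The following is only a minimal sketch under stated assumptions, not the software used in the show: the function names, the single tilt axis, the focal length, and all numbers are hypothetical, and lens distortion and lens shift are ignored.

```python
import math

def rotate_x(point, angle_rad):
    """Rotate a 3D point about the x axis (a simple stand-in for stage tilt)."""
    x, y, z = point
    c, s = math.cos(angle_rad), math.sin(angle_rad)
    return (x, c * y - s * z, s * y + c * z)

def stage_to_theater(point_on_stage, tilt_rad, stage_origin):
    """Place a point given in stage coordinates into theater coordinates."""
    x, y, z = rotate_x(point_on_stage, tilt_rad)
    ox, oy, oz = stage_origin
    return (x + ox, y + oy, z + oz)

def project(point, focal_px, center_px):
    """Ideal pinhole camera at the origin, looking along +z (assumed model)."""
    x, y, z = point
    if z <= 0:
        raise ValueError("point lies behind the virtual camera")
    return (focal_px * x / z + center_px[0], focal_px * y / z + center_px[1])

# Hypothetical numbers: the stage origin sits 10 units in front of the projector.
corner = stage_to_theater((1.0, 0.0, 0.0), tilt_rad=0.0, stage_origin=(0.0, 0.0, 10.0))
pixel = project(corner, focal_px=1000.0, center_px=(960.0, 540.0))
```

Whenever the sensor data changes the tilt or the origin, the same stage point lands on a different pixel, which is what keeps the image "glued" to the moving surface.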

The biggest task was to solve the mathematics needed to calculate the camera projection matrix from only a relatively small number of reference points in the theater. In the initial attempt, the image stuttered and reacted to the stage movement with a perceptible delay. Every projection system of this kind has to contend with latency (the time difference between sensor input and image output), with the limited refresh rate of the sensor data, or with both. In this case, it was both. The final solution was to predict the position of the stage some milliseconds into the future and to smooth those values using a Kalman filter.
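Smoothing and predicting a stage position with a Kalman filter can be sketched for a single axis as follows. This is an illustration only: it assumes a constant-velocity motion model, and the class name, the noise parameters, and the 50 ms look-ahead are hypothetical values, not those of the production system.

```python
class StageAxisFilter:
    """Constant-velocity Kalman filter for one stage axis (position, velocity)."""

    def __init__(self, measurement_var, accel_var):
        self.pos, self.vel = 0.0, 0.0
        # Symmetric 2x2 covariance, stored as three entries.
        self.p00, self.p01, self.p11 = 1.0, 0.0, 100.0
        self.r = measurement_var  # variance of the position sensor noise
        self.q = accel_var        # variance of the unmodeled acceleration

    def predict(self, dt):
        """Advance the state by dt seconds and grow the uncertainty."""
        self.pos += self.vel * dt
        p00 = self.p00 + dt * (2.0 * self.p01 + dt * self.p11)
        p01 = self.p01 + dt * self.p11
        self.p00 = p00 + self.q * dt**4 / 4.0
        self.p01 = p01 + self.q * dt**3 / 2.0
        self.p11 = self.p11 + self.q * dt**2

    def update(self, measured_pos):
        """Blend in a new position measurement."""
        residual = measured_pos - self.pos
        s = self.p00 + self.r
        k0, k1 = self.p00 / s, self.p01 / s
        self.pos += k0 * residual
        self.vel += k1 * residual
        p00, p01, p11 = self.p00, self.p01, self.p11
        self.p00 = (1.0 - k0) * p00
        self.p01 = (1.0 - k0) * p01
        self.p11 = p11 - k1 * p01

    def look_ahead(self, latency_s):
        """Extrapolate the position to compensate for output latency."""
        return self.pos + self.vel * latency_s

# Hypothetical test signal: the axis moves at 2 units/s, sampled at 100 Hz.
f = StageAxisFilter(measurement_var=1e-4, accel_var=1.0)
dt = 0.01
for k in range(1, 201):
    f.predict(dt)
    f.update(2.0 * k * dt)
predicted = f.look_ahead(0.05)  # where the stage should be 50 ms from now
```

Rendering the virtual stage at `predicted` instead of at the last measured value is what hides the latency; the filter itself takes care of the stutter caused by noisy or coarse sensor updates.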

For the physical projection, three large-venue DLP projectors overlay all of the images, a process known as "stacking". To converge all three projected images exactly onto the moving stage in three-dimensional space, every projector has to shape its image a little differently. A certain depth-of-field blur remains, since the projectors can physically focus only on a plane parallel to the lens.

The proscenium is modeled as a three-dimensional black mask in the projection software in order to avoid any image being projected on it. On top of this, the black portion of a DLP projected image is never completely black, but rather a dark grey called "video black". To hide the edge of the projected area in very darkly lit scenes, controllable dowsers are attached in front of the projectors. They can be closed gradually in order to softly blend the video black at the borders of the image, or completely hide it when no image is projected.

interactivity / sensors

Thanks to the Canadian inventor Philippe Jean of Les Ateliers Numériques, the movable stage floor was equipped with capacitive sensors. To put it simply, the whole stage becomes a giant touch-sensitive display. Interactions between the acrobats and the stage surface become possible; this is best seen where the acrobats' feet and bodies interact with the artificial water surface.

Infrared cameras, observing the near-infrared spectrum, are used for the interactivity of the underwater scene and the lianas (vines) of the forest. Here, infrared floodlights are used to illuminate the performers, with their light being invisible to the audience. By this means, the interaction becomes independent from stage lighting. Even in darkness everything is visible to the camera and the interaction continues working.

The input images from the camera have to be warped to line up with the projected image. This is necessary because the positions and optics of the infrared cameras and the projectors differ. This way, movement seen in the camera image triggers a reaction at the correct position within the projected image. Dichroic filters are used to remove the infrared portion from the follow spots so that they do not trigger interactions themselves.
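One common way to line up a camera image with a projected image is a planar homography estimated from four corresponding points. The sketch below shows that technique under the assumption of a flat surface and distortion-free lenses; whether the show uses this exact warp is not stated here, and all function names and coordinates are hypothetical.

```python
def solve_linear(a, b):
    """Solve a*x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("degenerate point configuration")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        tail = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - tail) / m[r][r]
    return x

def homography_from_points(camera_pts, projector_pts):
    """Estimate the 8 free homography parameters from 4 point pairs."""
    rows, rhs = [], []
    for (x, y), (u, v) in zip(camera_pts, projector_pts):
        rows.append([x, y, 1.0, 0.0, 0.0, 0.0, -u * x, -u * y])
        rhs.append(u)
        rows.append([0.0, 0.0, 0.0, x, y, 1.0, -v * x, -v * y])
        rhs.append(v)
    return solve_linear(rows, rhs) + [1.0]  # last entry fixed to 1

def apply_homography(h, point):
    """Map a camera pixel to the corresponding projector pixel."""
    x, y = point
    w = h[6] * x + h[7] * y + h[8]
    return ((h[0] * x + h[1] * y + h[2]) / w, (h[3] * x + h[4] * y + h[5]) / w)

# Hypothetical calibration: camera corners and where they appear in the projection.
camera_corners = [(0.0, 0.0), (1.0, 0.0), (1.0, 1.0), (0.0, 1.0)]
projector_corners = [(10.0, 20.0), (110.0, 25.0), (100.0, 130.0), (5.0, 120.0)]
h = homography_from_points(camera_corners, projector_corners)
```

With `h` in hand, every detected movement at a camera position can be passed through `apply_homography` before it is fed to the simulation, so the reaction appears under the performer rather than beside them.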

algorithmic images and physics

I almost exclusively use algorithms and simulations as means of expression within my work. The interaction can, in principle, control every behavior of the simulation and every parameter of its appearance. Often the single picture is not my main focus but rather the behavior of the image in time.

Behind the physical simulations of all images are mathematical constructs. Basic mathematical representations of the images within the current version of the show, updated in 2012, are:

- a particle system for the rain of arrows

- multiple layers of density fields for the storm clouds

- audio analysis of thunder sounds for the lightning

- mirrored sine waves for the waves of the sea

- particles and 2.5D reflection calculations for the air bubbles

- overlaid, distorted fractals and real-time lighting calculations for stone and ice

- chains of elastic springs for the lianas

- fields of elastic springs and light refraction for the water surface
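To give a flavor of one item in the list above, the chain of elastic springs used for the lianas can be sketched as a row of point masses connected by Hooke's-law springs. This is a minimal illustration, assuming unit masses, simple velocity damping, and semi-implicit Euler integration; the function names and all constants are hypothetical and not taken from the show software.

```python
import math

def step_chain(pos, vel, rest_len, stiffness, damping, gravity, dt):
    """Advance a hanging chain of unit masses by one time step (in place)."""
    n = len(pos)
    force = [[0.0, gravity] for _ in range(n)]  # y axis points downward
    for i in range(n - 1):
        dx = pos[i + 1][0] - pos[i][0]
        dy = pos[i + 1][1] - pos[i][1]
        length = math.hypot(dx, dy) or 1e-9
        pull = stiffness * (length - rest_len)  # Hooke's law
        fx, fy = pull * dx / length, pull * dy / length
        force[i][0] += fx
        force[i][1] += fy
        force[i + 1][0] -= fx
        force[i + 1][1] -= fy
    for i in range(1, n):  # node 0 is the fixed anchor point
        for axis in (0, 1):
            vel[i][axis] = (vel[i][axis] + force[i][axis] * dt) * (1.0 - damping * dt)
            pos[i][axis] += vel[i][axis] * dt

def simulate(nodes=5, steps=4000, dt=0.005):
    """Let a vertical chain settle under gravity and return the node positions."""
    pos = [[0.0, float(i)] for i in range(nodes)]
    vel = [[0.0, 0.0] for _ in range(nodes)]
    for _ in range(steps):
        step_chain(pos, vel, rest_len=1.0, stiffness=200.0,
                   damping=2.0, gravity=9.81, dt=dt)
    return pos

settled = simulate()
```

An interaction would simply add an extra force or displacement to the nodes near a detected performer before each step; the springs then propagate the disturbance along the chain.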

connectivity

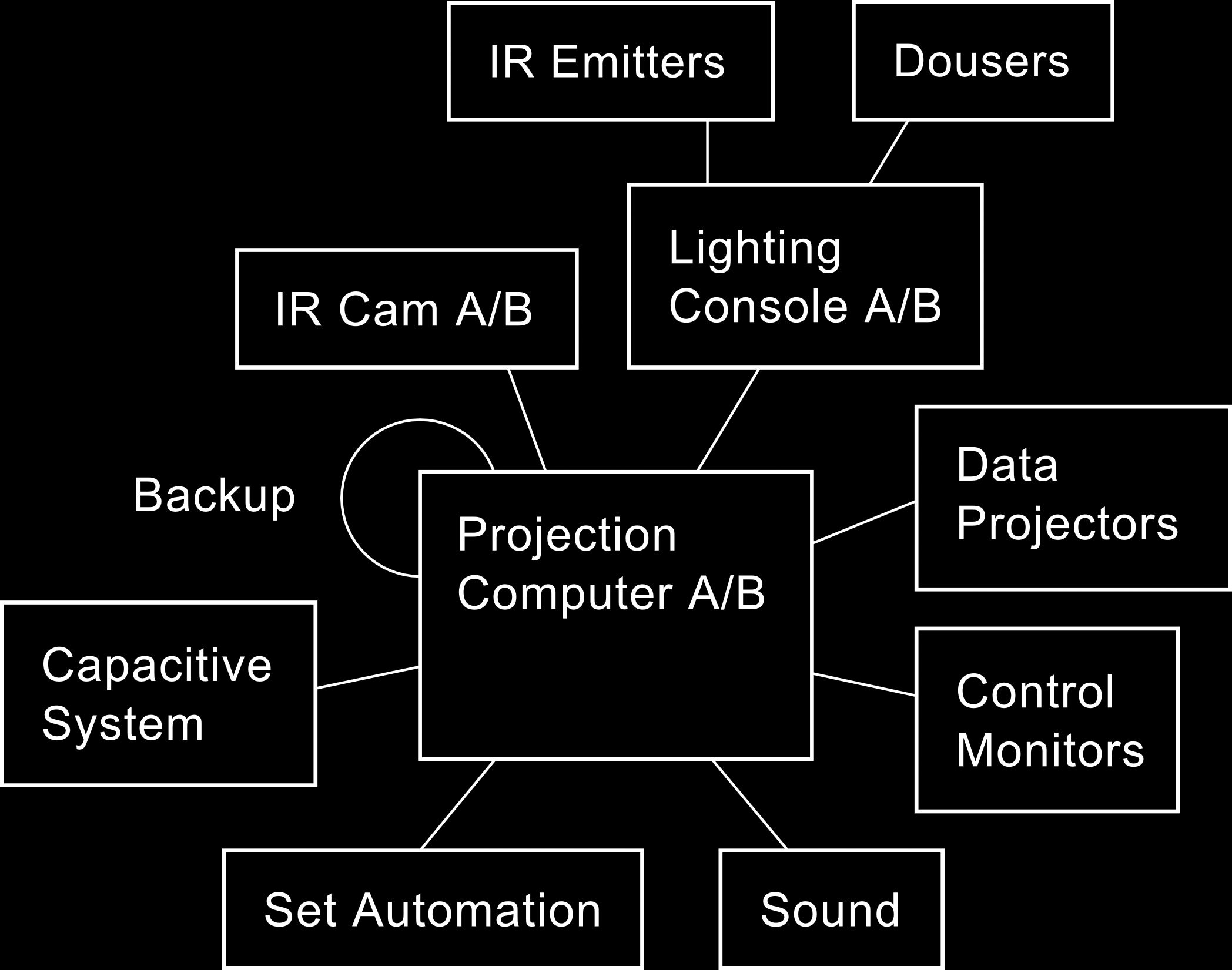

A networked interaction with other theater systems, known as "show control", heightens the presence of the images and their connected elements. For example, thunder from the sound department triggers lightning in the storm clouds. Also, the movement of acrobats underwater creates virtual air bubbles and triggers corresponding underwater sounds. The essential connected components of the projection system are shown in the following schematic diagram.
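A link such as "thunder triggers lightning" could, for example, be built on a simple loudness detector. The following sketch assumes a block-wise RMS measurement with two thresholds (hysteresis) so that one thunderclap produces one trigger; the function name, the thresholds, and the sample data are hypothetical, and the actual audio analysis in the show may work quite differently.

```python
import math

def detect_onsets(samples, window, threshold, release):
    """Return start indices of blocks whose RMS level rises above threshold.

    After a trigger, the detector re-arms only once the level has fallen
    to the release value or below.
    """
    triggers = []
    armed = True
    for start in range(0, len(samples) - window + 1, window):
        block = samples[start:start + window]
        rms = math.sqrt(sum(s * s for s in block) / window)
        if armed and rms >= threshold:
            triggers.append(start)
            armed = False
        elif not armed and rms <= release:
            armed = True
    return triggers

# Hypothetical signal: silence, a loud rumble, silence, a second short clap.
signal = [0.0] * 100 + [0.8] * 100 + [0.0] * 100 + [0.9] * 50
flashes = detect_onsets(signal, window=50, threshold=0.5, release=0.1)
```

Each index in `flashes` would correspond to one lightning event handed to the cloud simulation.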

The projection system is operated from a lighting desk. All essential system elements (the cameras, lighting desk, projection computers, and projectors) are redundant and thereby protected against a total breakdown. The computers have automatic failover switching: if one system fails or freezes, the backup system takes over automatically. The console operator can also switch between systems manually at any moment should this be necessary.
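Automatic failover with a manual override is often implemented with heartbeats and a timeout. The sketch below illustrates that general pattern only; the class, the system names "primary" and "backup", and the timeout are assumptions for the example and do not describe the actual switching hardware or software in the theater.

```python
class FailoverSwitch:
    """Chooses which of two systems feeds the projectors."""

    def __init__(self, timeout_s):
        self.timeout_s = timeout_s
        self.last_seen = {}        # system name -> time of last heartbeat
        self.manual_choice = None  # set by the console operator

    def heartbeat(self, system, now):
        """Record that a system reported in at time `now` (seconds)."""
        self.last_seen[system] = now

    def is_alive(self, system, now):
        seen = self.last_seen.get(system)
        return seen is not None and now - seen <= self.timeout_s

    def select_manually(self, system):
        """Operator override; pass None to return to automatic mode."""
        self.manual_choice = system

    def active(self, now):
        if self.manual_choice is not None:
            return self.manual_choice
        # Stay on the primary unless it is silent and the backup is healthy.
        if self.is_alive("primary", now) or not self.is_alive("backup", now):
            return "primary"
        return "backup"

switch = FailoverSwitch(timeout_s=0.5)
switch.heartbeat("primary", 0.0)
switch.heartbeat("backup", 0.0)
before = switch.active(0.1)   # both systems healthy
switch.heartbeat("backup", 1.0)
after = switch.active(1.2)    # primary has been silent for too long
```

A frozen system stops sending heartbeats just like a crashed one, which is why a timeout covers both failure cases mentioned above.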

For even more information on the technical background of the show, I'd like to point to the very competent and comprehensive articles by John Huntington in Lighting and Sound America as well as to this article by Davin Gaddy concerning the 2012 updates.

credits

creation 2004

My gratitude goes to Guy Laliberté and Robert Lepage for being crazy and courageous enough to assign me the role of projection designer. The project was a dozen times bigger than any project I had been involved with up until then. I want to thank Guy Caron and Stéphane Mongeau for their exceptional planning and taming of the overall project. Many thanks also go to the team of creators of this show for their close collaboration and cooperative working atmosphere. The creative team was:

| Role | Name |
| --- | --- |
| Guide | Guy Laliberté |
| Creator and Director | Robert Lepage |
| Set Designer | Mark Fisher |
| Costume Designer | Marie-Chantale Vaillancourt |
| Composer and Arranger | René Dupéré |
| Choreographer | Jacques Heim |
| Lighting Designer | Luc Lafortune |
| Sound Designer | Jonathan Deans |
| Interactive Projections Designer | Holger Förterer |
| Puppet Designer | Michael Curry |
| Props Designer | Patricia Ruel |
| Acrobatic Equipment and Rigging Designer | Jaque Paquin |
| Aerial Acrobatics Designer | André Simard |

I owe thanks to Madeleine Jean and Dominik Rinnhofer for their assistance, which supported me greatly during especially stressful times. Without them, some feats would not have been pulled off so easily. For the creation of the software, I had the support of four very talented programmers: Peter Ibrahim, Mykel Brisson, Daniel Fournier, and Alain Trépanier. Steve Montambault oversaw the planning of the hardware. Special thanks go to Philippe Jean and Louis-Philippe Demers for the outrageous development of the giant capacitive system.

Thanks to the people in charge of the lighting department, of which we as the projection team were a part: in particular, to Jeanette Farmer and Nils Becker for solutions to all the things that at first seemed impossible; to the lead projectionist Jon Mytyk and to Tony Saurini for their close and productive teamwork at all possible and impossible times of day and night; and to all of the lighting and stage technicians, especially Liz Koch.

Thank you very much to Jörg Lemke for many hours of discussion about artistic concepts and life. I would like to say thank you to Neilson Vignola, Anh-Dao Bui, and Simon Lemieux for their collaboration. Last but not least, thanks to Jeremy Hodgson from automation and to Nol Van Genuchten for his help during the planning phase of lighting.

Because such a big project can become more than a little confusing, I sincerely apologize to everyone whom I may have forgotten to mention in this long list.

revisited version 2012

Thank you to the new lead projectionist, Davin Gaddy, for the extremely uncomplicated collaboration and his many good ideas. Thanks to Nils Becker and Chris Kortum for the planning and supervision of the implementation and for fast help when some things did not work right away. I'd also like to thank the technical director Erik Walstad and the artistic director Marie-Hélène Gagnon for their helpful support.

I owe a lot of gratitude to Christian Wening and his team for their, as usual, competent hardware research and for building the new projection computers.

Finally, many thanks to Davin Gaddy for reviewing the English text of this documentation.