Sound is all around us, always. I wanted to create an installation that would allow the visitor to hear the ordinary world with new ears for a moment.

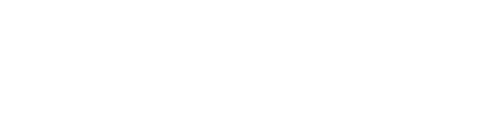

The Sound of Things is an interactive sound installation. Ordinary things lie on a table: a stack of papers, a wine glass, candles, a lamp. When the visitor puts on the headphones, every item on the table starts to make sound. The visitor can experience and explore this three-dimensional soundscape by turning their head and walking around.

Most of the sounds were created by striking, tapping, rubbing and kneading the very things on the table. The sounds of some items are further augmented with noises that are closely related to the respective object, either acoustically or socially. For example, on getting close to the wine glass, the visitor can hear the noise of a celebration inside the glass.

A few sounds have been enriched by pareidolia. Pareidolia, according to Wikipedia (January 2013), is "a type of apophenia, seeing patterns in random data", for example seeing figures in clouds. I transcribed some of the sounds into German or English words, recorded the spoken words and then re-inserted them into the sounds using a vocoder.

realization

To track the position and rotation of the headphones, I use three LEDs attached to the headphones together with eight infrared cameras and custom motion-tracking software. The USB cameras are not synchronized, but since there are eight of them, errors average out sufficiently and occlusions are rare.
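As a rough illustration of how three tracked points can yield both position and rotation, here is a minimal sketch. It is not the installation's actual tracking code. It assumes the 3D positions of the three LEDs have already been reconstructed from the camera images, and it assumes a hypothetical layout with one LED at the left ear cup, one at the right ear cup and one on top of the headband. The names `Vec3`, `HeadPose` and `poseFromLeds` are made up for this example.

```cpp
#include <cmath>

// Minimal 3D vector helpers (hypothetical, for this sketch only).
struct Vec3 { double x, y, z; };

Vec3 operator+(Vec3 a, Vec3 b) { return {a.x + b.x, a.y + b.y, a.z + b.z}; }
Vec3 operator-(Vec3 a, Vec3 b) { return {a.x - b.x, a.y - b.y, a.z - b.z}; }
Vec3 operator*(Vec3 a, double s) { return {a.x * s, a.y * s, a.z * s}; }
double dot(Vec3 a, Vec3 b) { return a.x * b.x + a.y * b.y + a.z * b.z; }
Vec3 cross(Vec3 a, Vec3 b) {
    return {a.y * b.z - a.z * b.y, a.z * b.x - a.x * b.z, a.x * b.y - a.y * b.x};
}
Vec3 normalize(Vec3 a) { return a * (1.0 / std::sqrt(dot(a, a))); }

// Head position plus an orthonormal frame describing its rotation.
struct HeadPose {
    Vec3 position;
    Vec3 right, up, forward;
};

// Assumed LED layout: left ear cup, right ear cup, top of the headband.
// The three points must not be collinear, otherwise 'up' is undefined.
HeadPose poseFromLeds(Vec3 left, Vec3 right, Vec3 top) {
    HeadPose pose;
    // Head centre: midpoint between the two ear-cup LEDs.
    pose.position = (left + right) * 0.5;
    // Ear-to-ear axis.
    pose.right = normalize(right - left);
    // Remove the component along 'right' so that 'up' is perpendicular to it.
    Vec3 toTop = top - pose.position;
    pose.up = normalize(toTop - pose.right * dot(toTop, pose.right));
    // Right-handed frame with the viewing direction along -z (OpenGL style).
    pose.forward = cross(pose.up, pose.right);
    return pose;
}
```

With LEDs at (-0.1, 0, 0), (0.1, 0, 0) and (0, 0.1, 0), the head sits at the origin and looks along the negative z axis. The ear positions needed for the audio rendering could then be derived from `position` and `right`.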

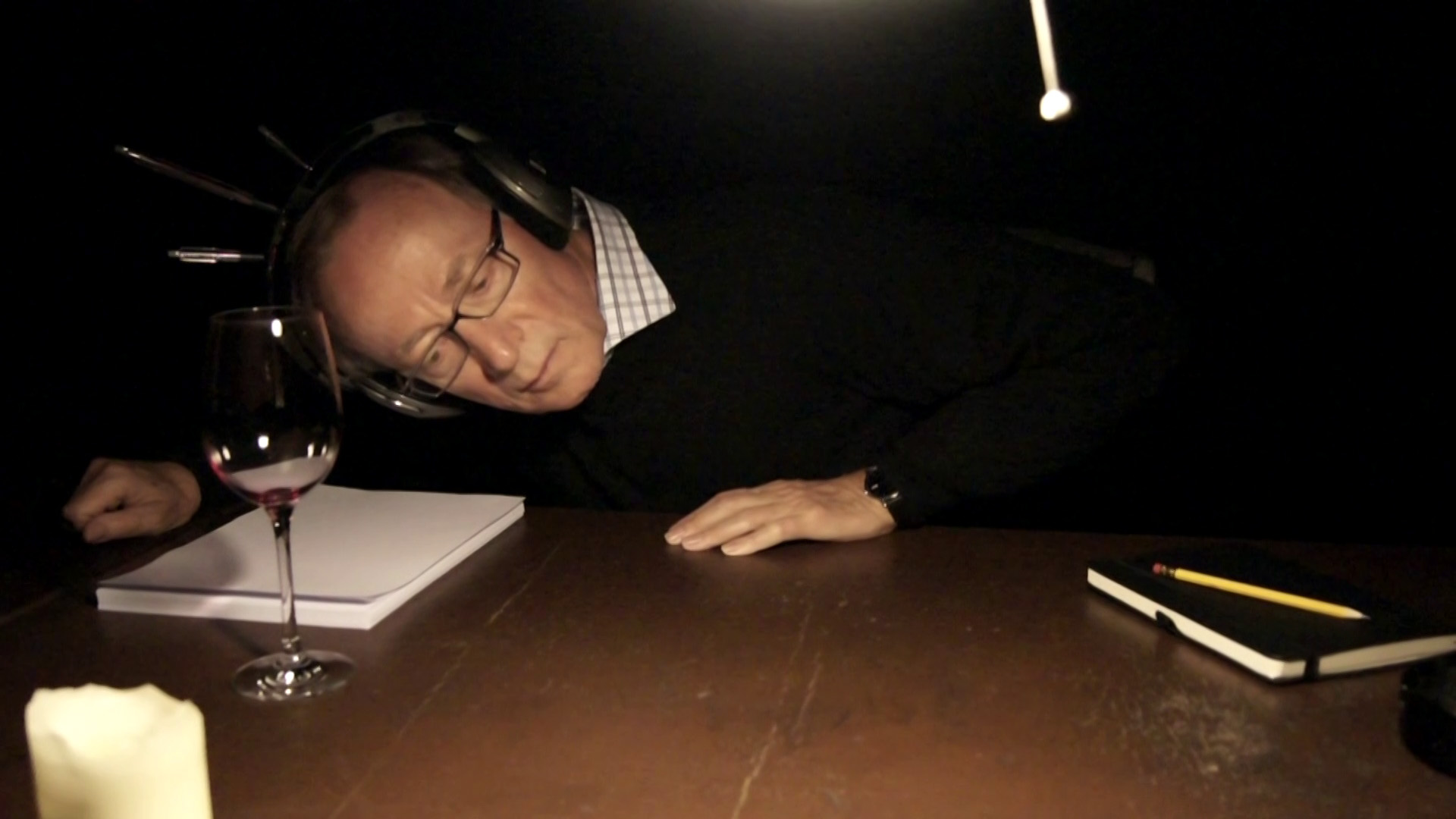

To make all sound sources appear at the correct positions in real space, I had to build a virtual copy of the table together with the objects standing on it. Reality and virtual elements were then overlaid by a calibration procedure. The result is an augmented reality scenario where the real world is enriched with virtual sounds.

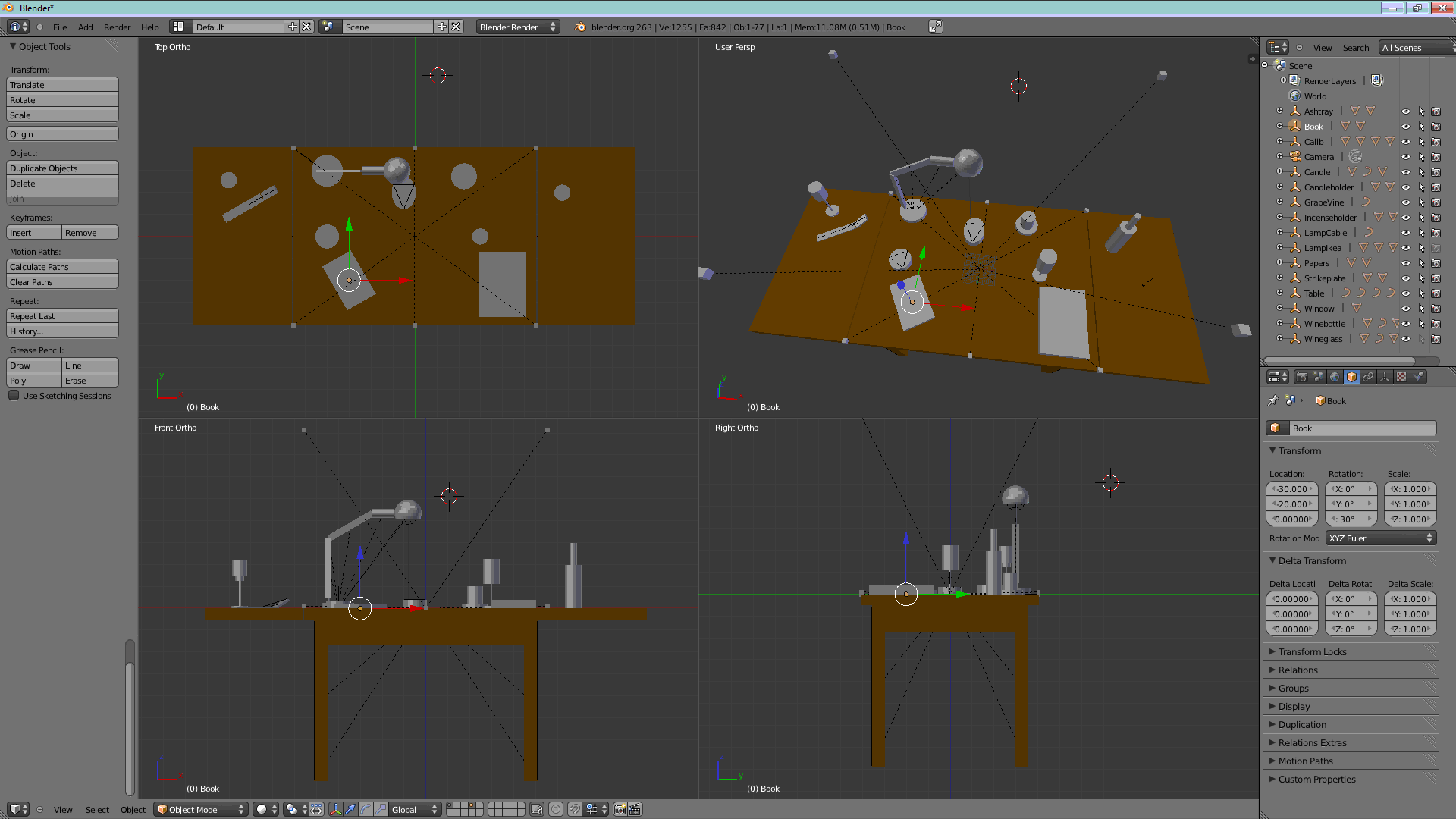

To achieve the spatial acoustics via headphones, I use a combination of delays, filters, acoustic damping, reverb and so-called head-related transfer functions (HRTFs). As a result, the sounds seem to originate from the objects themselves. Below is a flow chart depicting the processing chain of a single sound source for a single ear.
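To make the first two stages of such a chain more concrete, the following sketch computes a propagation delay and a distance-dependent gain for one sound source and one ear. It only illustrates the general idea, not the installation's actual processing. The speed of sound (343 m/s), the sample rate (44100 Hz), the inverse-distance damping law and the 0.1 m reference distance are all assumptions made for this example, as are the names `Point`, `EarParams` and `earParams`. Filters, reverb and the HRTF convolution are left out.

```cpp
#include <algorithm>
#include <cmath>

// Position in metres (hypothetical type for this sketch).
struct Point { double x, y, z; };

double distanceBetween(Point a, Point b) {
    const double dx = a.x - b.x, dy = a.y - b.y, dz = a.z - b.z;
    return std::sqrt(dx * dx + dy * dy + dz * dz);
}

// Per-ear parameters for one point source.
struct EarParams {
    double delaySamples;  // propagation delay, in samples
    double gain;          // distance damping, in the range (0, 1]
};

EarParams earParams(Point source, Point ear, double sampleRate = 44100.0) {
    const double speedOfSound = 343.0;     // m/s, assumed value
    const double referenceDistance = 0.1;  // m, assumed; gain is 1 inside this radius
    const double d = distanceBetween(source, ear);
    EarParams p;
    // Time of flight from source to ear, converted to samples.
    p.delaySamples = d / speedOfSound * sampleRate;
    // Assumed inverse-distance law, clamped so the gain never exceeds 1.
    p.gain = referenceDistance / std::max(d, referenceDistance);
    return p;
}
```

Calling `earParams` once per ear gives two slightly different delays and gains for the same source. Those differences are part of what lets a listener localize a sound, and they change continuously as the tracked head moves.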

Tracking and sound processing were both quite easy to parallelize. Both could be run on separate computers if necessary.

I used point sound sources, but always several per object. Most sounds were recorded in stereo on the table itself. The stereo recordings allowed me to achieve a richer sound by placing more than one sound source for each object, and recording on the table ensured that the room acoustics were quite close to reality when an ear moved close to the object.

credits

The Sound of Things was my final project at the Hochschule für Gestaltung Karlsruhe in 2013. I'd like to express my heartfelt thanks to everybody who supported me, especially:

| role | name |
| --- | --- |
| supervision | Prof. Michael Bielicky, Ludger Pfanz |
| support in all respects | Lorenz Schwarz, Insa Foce |
| hardware, LED technology | Christian Wening – Computer Consult Wening |
| support tracking | Paul Modler |
| headphone modification | Alex Wenger, Andreas Lang |
| support recording | Frank Halbig |
| HfG studio | Sebastian Schäfer, Andreas Beckert |
| | Roland Merz – Comyk |
| video advice | Frederik Busch |
| HRTF | Bill Gardner and Keith Martin – MIT Media Lab |

I wrote both the tracking and the sound generation software in C++. During development I used the following libraries: OpenCV, OpenMP, OpenAL, OpenGL, Steinberg ASIO, CodeLaboratories CLEye SDK. I also used the HRTF database of the MIT Media Lab; Cubase and Audacity to construct sound loops; and Blender to build the virtual table model.